NVIDIA CEO Jensen Huang visits Samsung Electronics’ booth at GTC and signs AI chip wafers, highlighting their partnership. / Samsung Electronics

NVIDIA has expanded its partnership with Samsung Electronics by entrusting it with the production of a next-generation AI inference chip, signaling a deepening alliance that now extends beyond memory into advanced foundry manufacturing.

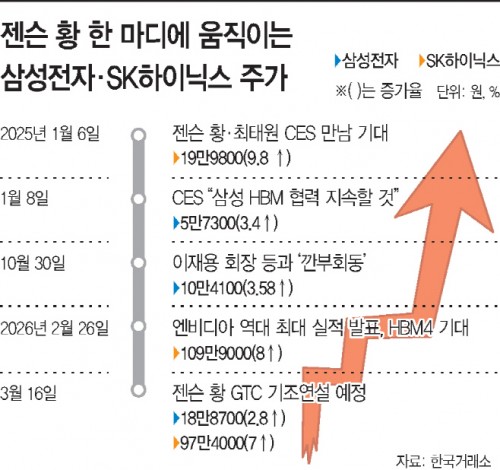

Speaking at the GTC keynote, Jensen Huang said, “Samsung is manufacturing the ‘Groq 3 LPU’ chip for us. We are ramping up production as fast as possible. Thank you, Samsung.”

The Groq 3 LPU is designed for AI inference tasks and is being manufactured at Samsung’s foundry on an advanced 4-nanometer process. The chip is expected to begin shipping in the third quarter, with production ramping up rapidly.

Groq, an AI chip startup backed by NVIDIA with a $20 billion investment, focuses on inference performance for language models. Unlike conventional AI chips, its architecture minimizes latency by integrating SRAM on the chip and avoiding reliance on high-bandwidth memory (HBM), which has recently faced supply constraints.

While TSMC remains NVIDIA’s primary foundry partner, the latest move highlights Samsung’s growing role in AI chip manufacturing.

Industry analysts say this marks a significant milestone for Samsung’s foundry business, as it secures a key position in producing critical AI system semiconductors—not just memory.

The collaboration builds on an already expanding partnership. Samsung has previously manufactured NVIDIA’s robotics processors, including chips for its Jetson platform, and has joined the NVLink Fusion ecosystem, which enables customized AI chip connectivity.

Samsung also used the GTC event to highlight its latest memory technologies, including HBM4 and next-generation HBM4E, aiming to reclaim leadership in the high-bandwidth memory market.

The company demonstrated a full AI system configuration featuring NVIDIA’s next-generation Vera Rubin platform, integrating HBM, low-power DRAM modules (SOCAMM2), and high-performance SSDs.

This positions Samsung as a rare integrated device manufacturer capable of delivering a complete “memory-to-logic” solution for AI infrastructure.

The collaboration between NVIDIA and Samsung dates back more than two decades, beginning with SDRAM supply for NVIDIA GPUs in the late 1990s. It has since evolved through multiple generations of HBM development.

Analysts note that as AI performance increasingly depends on the integration of compute chips, memory, and advanced packaging, Samsung’s broad semiconductor capabilities could become a key competitive advantage.

The latest development suggests that the NVIDIA-Samsung partnership is entering a new phase—one that could reshape competition in the global AI semiconductor supply chain.


Copyright by Asiatoday
